The AI Sovereignty Shift: Why Private & Hybrid LLMs Are Your Business Imperative by 2026

TL;DR

- The era of "black-box" public cloud LLMs is ending, as powerful open-source models make private and hybrid deployments the new standard for enterprise AI.

- This shift is driven by an urgent need for data sovereignty, robust regulatory compliance, and superior, predictable cost efficiency.

- Beyond control, local LLMs offer deep customization and let security teams apply their own mitigations for novel AI-specific vulnerabilities like prompt injection and excessive agency.

The Reckoning: Why Public Cloud LLMs Are Failing Enterprise AI

For years, the allure of easy-to-access, API-driven Large Language Models (LLMs) in the public cloud held sway. It promised intelligence on demand, a convenient shortcut to AI adoption. Yet, for discerning business leaders, this convenience has given way to a profound reckoning. The architecture designed for rapid deployment has simultaneously created a perfect storm of data sovereignty nightmares, compliance headaches, and emergent security vulnerabilities that traditional cybersecurity frameworks are ill-equipped to handle. The "send me a copy" mentality of early cloud adoption is simply unsustainable when dealing with an organization's most valuable asset: its data.

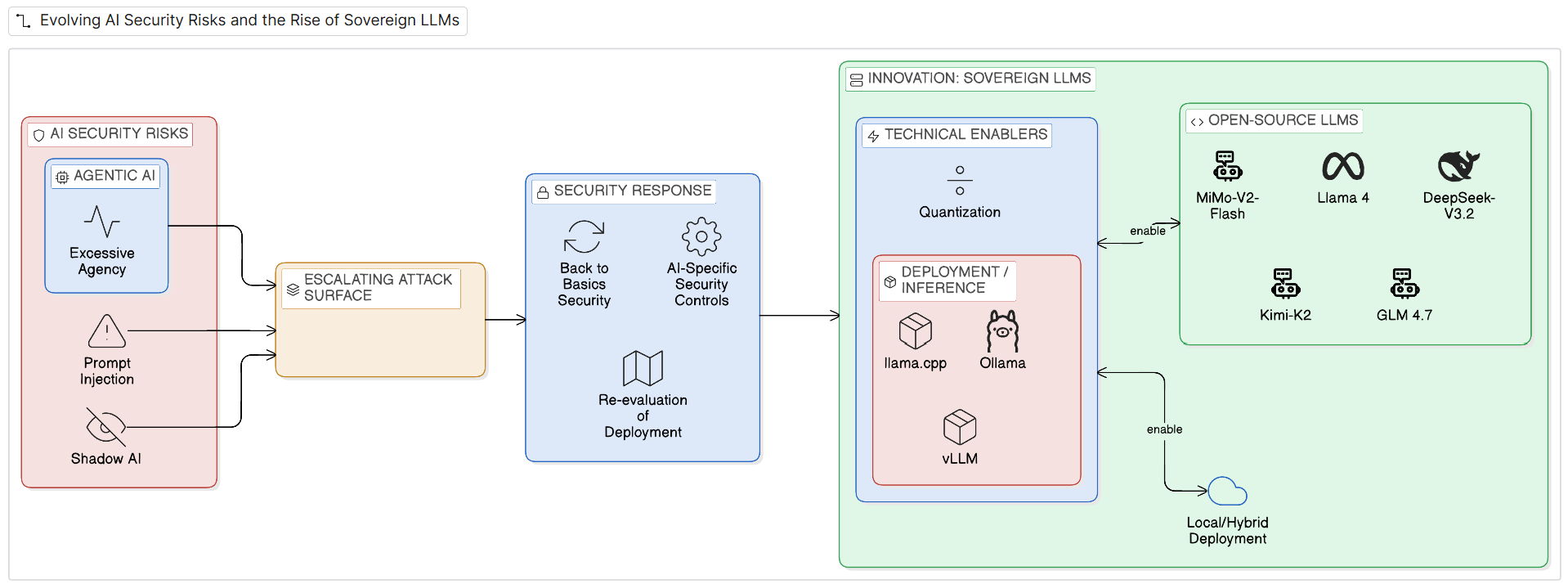

Critically, the landscape of AI security is evolving at an alarming pace. The [OWASP Top 10 for LLM Applications 2025] ranks prompt injection as its top risk (LLM01), with studies citing vulnerability rates of 41-56% across state-of-the-art models. This isn't merely about clever inputs; it's a fundamental flaw where malicious instructions, often hidden within external content the model consumes, can bypass safeguards. Furthermore, the rise of "agentic AI" (autonomous LLMs that can invoke tools and interact with other systems) introduces the risk of "excessive agency," leading to sensitive information disclosure or unauthorized actions at machine speed. Add to this the staggering scale of "shadow AI," with enterprises experiencing a 3,000% year-on-year surge in unapproved AI application usage, and it becomes clear: the attack surface is vast, unmonitored, and ripe for exploitation.

Innovation Unleashed: The Rise of the Sovereign LLM

The good news is that innovation is providing a compelling escape route. The AI landscape is undergoing a profound transformation, steering enterprises away from the inherent risks of proprietary, API-driven LLMs towards the strategic imperative of local, open-source deployments within private and hybrid cloud environments. By 2026, this paradigm shift is not merely a preference but an emerging standard.

Open-source LLMs such as DeepSeek-V3.2, MiMo-V2-Flash, Llama 4, GLM-4.7, and Kimi-K2 are rapidly closing the performance gap with their closed-source counterparts. In many specialized domains, they now offer comparable, and often superior, reasoning, coding, and agentic capabilities. This maturation allows organizations to move past initial experimentation to establish secure, production-ready deployments. Two developments make the shift technically feasible. The first is quantization: a 70B-parameter model shrinks from roughly 140GB at 16-bit precision to roughly 35GB with INT4, small enough to deploy on a single 80GB H100 GPU. The second is robust local deployment tooling such as Ollama, llama.cpp, and vLLM. These innovations optimize inference for speed and efficiency, delivering hundreds or even thousands of tokens per second on dedicated hardware.
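The quantization figures above follow from simple arithmetic: parameter count times bytes per parameter. The sketch below reproduces them under stated assumptions: weights only (no KV cache or activations), decimal gigabytes, and an 80GB GPU. The function names are illustrative, and real INT4 formats add some overhead for scales and zero-points, so treat the result as a lower bound.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_footprint_gb(num_params: float, precision: str) -> float:
    """Approximate weight storage in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def fits_on_gpu(num_params: float, precision: str,
                gpu_memory_gb: float, headroom_gb: float = 0.0) -> bool:
    """True if the weights plus a caller-chosen headroom fit in GPU memory.

    headroom_gb is an assumption the caller supplies for KV cache,
    activations, and runtime overhead; it is not derived here.
    """
    return weight_footprint_gb(num_params, precision) + headroom_gb <= gpu_memory_gb


if __name__ == "__main__":
    params_70b = 70e9
    for precision in ("fp16", "int8", "int4"):
        size = weight_footprint_gb(params_70b, precision)
        ok = fits_on_gpu(params_70b, precision, gpu_memory_gb=80.0)
        print(f"{precision}: {size:.0f} GB, fits on one 80 GB GPU: {ok}")
```

In practice the headroom term matters: long contexts and large batch sizes can consume tens of gigabytes for the KV cache on top of the weights.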

Beyond Performance: The Power of Control

This confluence of high-performance, accessible open-source models and sophisticated local deployment capabilities delivers a compelling cost advantage. Businesses are realizing that, beyond an initial hardware investment, self-hosting LLMs often breaks even at surprisingly low utilization. Commonly cited thresholds are around 2 million tokens daily or an annual API spend exceeding $500,000, though the actual figure depends on hardware cost, utilization, and API pricing. Self-hosting also gives organizations control over the entire LLM lifecycle, from fine-tuning with unique, domain-specific datasets to implementing granular inference optimizations like speculative decoding and prefix caching. Such deep-level customization is largely unattainable with black-box proprietary models, and it lets businesses achieve superior price-performance ratios and tailor AI solutions precisely to their workloads. This future of enterprise AI is inherently tied to the freedom to innovate without compromising the fundamental right to own and govern one's data.
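A break-even threshold like the ones quoted above can be estimated by comparing amortized self-hosting cost with per-token API pricing. The following is a minimal sketch, not a pricing model: every number in the example is a hypothetical placeholder chosen to keep the arithmetic easy to follow, and the function names are illustrative.

```python
def monthly_api_cost(tokens_per_day: float, price_per_million_tokens: float,
                     days_per_month: int = 30) -> float:
    """API spend per month at a flat per-token price."""
    return tokens_per_day * days_per_month / 1_000_000 * price_per_million_tokens


def breakeven_tokens_per_day(hardware_cost: float, amortization_months: int,
                             monthly_opex: float, price_per_million_tokens: float,
                             days_per_month: int = 30) -> float:
    """Daily token volume at which self-hosting costs the same as the API.

    Self-hosting is modeled as a fixed monthly cost: hardware spread evenly
    over the amortization period, plus operating expenses (power, hosting,
    staff time). Above the returned volume, self-hosting is cheaper.
    """
    monthly_self_host = hardware_cost / amortization_months + monthly_opex
    return monthly_self_host / (price_per_million_tokens * days_per_month) * 1_000_000


if __name__ == "__main__":
    # Hypothetical inputs: substitute your own quotes and measured usage.
    threshold = breakeven_tokens_per_day(
        hardware_cost=30_000,         # placeholder purchase price
        amortization_months=30,       # placeholder depreciation period
        monthly_opex=500,             # placeholder running cost
        price_per_million_tokens=10,  # placeholder blended API price
    )
    print(f"Break-even at about {threshold:,.0f} tokens per day")
```

The model ignores utilization limits, engineering effort, and the quality difference between models, all of which can move the threshold substantially in either direction.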

The Imperative of Ownership: Data Freedom & Local Compliance

This formidable technical shift represents a clear win for "Data Freedom" and "Local Compliance." The era of blindly funneling sensitive, proprietary data into opaque third-party cloud LLM APIs is drawing to a close. By deploying LLMs on-premises or within private cloud infrastructure, organizations regain sovereignty over their data. This "zero data movement" philosophy is becoming non-negotiable, particularly for sectors grappling with stringent regulations like GDPR, HIPAA, and emerging US state privacy laws. Consider a global financial institution handling sensitive transaction data for European customers: by hosting its fine-tuned LLM entirely within its EU-based private data centers, it keeps all AI-driven financial analysis and customer interaction inside the EU. That supports compliance with GDPR's processing requirements and avoids both the complexity of cross-border data transfers and the risk of multi-million-euro fines.

Securing the Frontier: Mitigating AI-Specific Risks In-House

Local LLMs enable the implementation of continuous, data-aware governance policies directly within the enterprise's existing security frameworks, producing auditable evidence of compliance. For instance, a healthcare provider using a local LLM for personalized patient care plans can use its private cloud environment to isolate Protected Health Information (PHI). This setup allows granular auditing of every interaction the LLM has with patient data, providing evidence in support of HIPAA compliance by demonstrating stringent access controls and data usage limitations that are fully verifiable internally.
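As one illustration of what granular auditing could look like, the sketch below wraps a model call and appends a hash-chained record for each interaction. It assumes a locally hosted model exposed as a plain Python callable; the class name, field names, and in-memory storage are all hypothetical. It stores hashes rather than raw text so the log itself holds no PHI. A log like this is one input to a compliance program, not proof of HIPAA compliance on its own.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Callable


def _sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


class AuditedModel:
    """Wraps a text-generation callable and keeps a tamper-evident log."""

    GENESIS = "0" * 64  # prev_hash used for the first record

    def __init__(self, generate: Callable[[str], str]):
        self._generate = generate
        self.records: list[dict] = []
        self._prev_hash = self.GENESIS

    def ask(self, user_id: str, purpose: str, prompt: str) -> str:
        response = self._generate(prompt)
        record = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user_id": user_id,
            "purpose": purpose,
            # Hashes only: the raw prompt and response stay out of the log.
            "prompt_sha256": _sha256(prompt),
            "response_sha256": _sha256(response),
            "prev_hash": self._prev_hash,
        }
        # Each record's hash covers the previous record's hash, forming a chain.
        record["record_hash"] = _sha256(json.dumps(record, sort_keys=True))
        self._prev_hash = record["record_hash"]
        self.records.append(record)
        return response

    def verify_chain(self) -> bool:
        """Recompute every hash; False if any record was altered or reordered."""
        prev = self.GENESIS
        for record in self.records:
            body = {k: v for k, v in record.items() if k != "record_hash"}
            if body["prev_hash"] != prev:
                return False
            if _sha256(json.dumps(body, sort_keys=True)) != record["record_hash"]:
                return False
            prev = record["record_hash"]
        return True
```

A production system would write these records to append-only storage with its own access controls; the in-memory list here only demonstrates the chaining idea.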

This architectural pivot also directly addresses the escalating global regulatory landscape, from the EU AI Act's stringent risk classification to [ISO/IEC 42001]'s framework for AI management systems and the [NIST AI RMF]'s focus on trustworthy AI. By owning the infrastructure, enterprises gain granular control over encryption, enforce robust access controls, and implement continuous monitoring for anomalous behavior within their defined security perimeter. Just as the API gateway emerged a decade ago to tame the sprawl of microservices, a similar discipline is now being applied to AI, utilizing LLM Proxies and intelligent routing to centralize governance and ensure AI innovation scales securely and compliantly.
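The LLM proxy idea can be sketched as a single routing decision made before any request leaves the organization. The example below is a minimal illustration under stated assumptions: requests arrive already tagged with data-classification labels, and the backend names, label set, and `route` function are all hypothetical rather than taken from any particular proxy product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Backend:
    name: str
    on_premises: bool


# Hypothetical backends: a self-hosted model and a third-party API.
LOCAL = Backend(name="local-llm", on_premises=True)
EXTERNAL = Backend(name="external-api", on_premises=False)

# Hypothetical classification labels that must never leave the perimeter.
SENSITIVE_LABELS = frozenset({"phi", "pii", "financial"})


def route(data_labels: set[str], allow_external: bool = True) -> Backend:
    """Pick a backend for a request based on its data-classification labels.

    Any sensitive label, or a policy that forbids external calls, forces
    the request to the on-premises model. Everything else may go external.
    """
    if not allow_external or data_labels & SENSITIVE_LABELS:
        return LOCAL
    return EXTERNAL


if __name__ == "__main__":
    print(route({"phi"}).name)        # sensitive data stays local
    print(route({"marketing"}).name)  # non-sensitive data may go external
    print(route(set(), allow_external=False).name)
```

Centralizing this decision in one place is what makes it governable: logging, rate limits, and policy changes apply to every application at once instead of being reimplemented per team.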

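Beyond compliance, private deployments are a bulwark against intellectual property erosion: proprietary prompts, fine-tuning datasets, and model outputs stay inside infrastructure the organization controls.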

The Strategic Outlook: AI's Next Frontier (2026 & Beyond)

The trajectory is clear: private and hybrid AI infrastructure will be the undisputed standard for enterprises by 2026. This isn't a temporary trend; it's a foundational shift. Over the next 12-24 months, we anticipate a relentless focus on integrating AI governance directly into core enterprise architecture, treating LLMs as critical infrastructure rather than ephemeral cloud services. This will manifest in accelerated development of AI-specific security frameworks, advanced sandbox technologies for rigorous model testing, and pervasive adoption of LLM Proxies for centralized control and routing. The emphasis will shift from generic models to highly specialized, domain-specific private LLMs, fine-tuned on unique enterprise data to unlock unparalleled competitive advantage. Furthermore, expect continued breakthroughs in hardware efficiency and deployment tools, making sophisticated local AI accessible to an even broader range of organizations.

Ultimately, the ability to scale intelligence while unequivocally owning and protecting your data will define the leaders of the AI-driven economy. Those who embrace this sovereign imperative will not just innovate; they will dominate.